Ignore Significance. Embrace Relevance!

Listen to the Audio Version here

“Although significance testing only dates back to the 1930s, when Fisher propagated it, and although it is logically flawed and clearly retards the growth of knowledge, social science researchers are enslaved by this very bad idea. Even now, with the American Statistical Association's explicit repudiation of the concept, researchers cling to it desperately.” Ed Rigdon, professor at Georgia State University, in a recent LinkedIN post.

Wow. That's a big statement. Can it be true? Market researchers and data scientists cling desperately to the concept of "significance"!

When looking at market research and analysis results in business, two questions often arise: Is it representative and is it significant? What people want to know is "does it apply to everyone" and "is the effect large"?

If we ignore the question of representativeness for a moment and focus on significance, the usual answer - in both science and business - is to quote the p-value. This is also known as significance level or probability of error. At 0.01, the p-value is really good, and in practice, even 0.1 is sometimes accepted as sufficient. Significance is used to distinguish between right and wrong.

“Significance is used to judge between right and wrong.“

Let's take a look at the official statement from the American Statistical Association on the p-value:

Principle 1: P-values can indicate whether data are inconsistent with a particular statistical model.

Principle 2: P-values do not measure the probability that the hypothesis being tested is true or the probability that the data occurred by chance alone.

Principle 3: Scientific conclusions or policy decisions should not be based solely on whether a p-value exceeds a certain threshold.

Principle 4: Proper conclusions require full reporting and transparency.

Principle 5: A p-value or statistical significance is not a measure of the magnitude of an effect or the importance of a finding.

Principle 6: A p-value alone is not a good measure of the power of a model or hypothesis.

There now seems to be a scientific consensus that significance values are not very useful, if not dangerous.

Significance can be created at will

Language is a poor guide. We all know sentences like "Mr. Y had a significant impact on X". What is meant here is "great impact" or "important impact". But just because something is statistically significant does not mean that it must be important. On the contrary, something that is statistically significant can be very small and irrelevant.

In market research jargon, significant means "proven to be true". In science, the term "p-hacking" has emerged. Models and hypotheses are changed and data selected until the P-value is below the desired threshold. P-hacking is common in science. Guess how it is in market research! What do you think?

Significance has nothing to do with relevance

In practice, almost any correlation becomes significant if the sample is large enough. Significance does not measure the strength of a correlation, but whether it can be assumed to be true or present, assuming models IMPLICIT assumption are true.

A strong correlation usually requires a smaller sample to become significant. This phenomenon often leads to the misconception that significance also measures relevance. This is not the case. Every Bavarian is a German, but not every German is a Bavarian.

Significance has nothing to do with relevance. The misunderstanding arises from the fact that significant, important effects often have a better p-value than effects that are only supposed to be significant. On the other hand, any supposed effect only needs a sample large enough to be significant. Thus, a minimal effect can be significant. But all this is clear to many market researchers. The real problem lies elsewhere:

Significance in itself is not meaningful

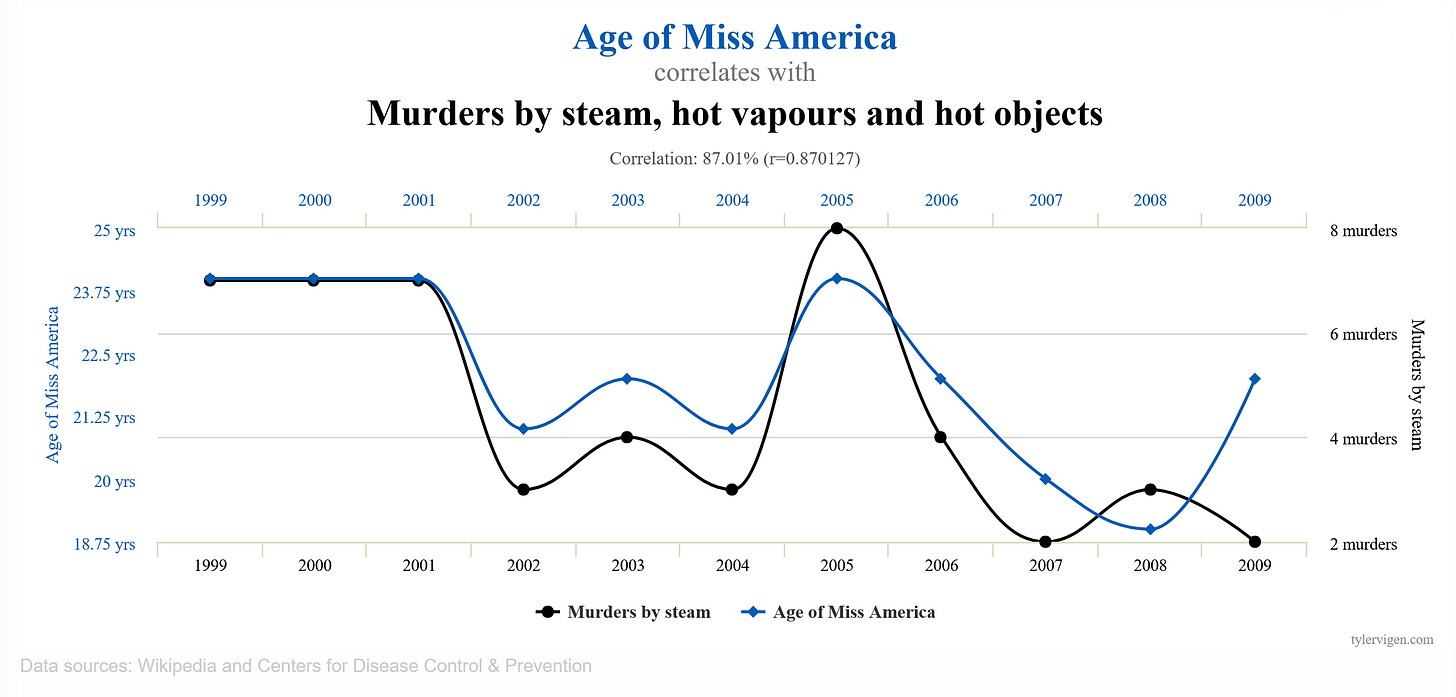

We have all seen the outrageous examples of correlation, such as that between the "age of the Miss America of a given year" and the "number of murders with steam or other hot objects". With N=8 years, this statistic already has a P-value of 0.001.

There is no clearer example of how inappropriate the P-value is for testing a correlation for truth. But why is it still used to judge right and wrong? The answer is varied.

Some will say, "The client wants it that way". But the more relevant question is: How do I know if a correlation is trustworthy?

To answer that, we should clarify what the p-value is trying to judge: the p-value is trying to judge a) differences between facts OR b) the existence of a causal relationship.

Questions about differences are: "Are more customers buying product X or Y?" The result of a survey shows two outcomes that are then compared. A comparison using a significance test is limited when

1. the representativeness is limited.

If a smartphone brand surveys only young people, the result will not reflect the population as a whole because older people have different needs. If the sample does not represent the population well, the results will not be accurate. However, only the characteristics of the people that have an impact (see correlation) on the measurement result are relevant. This point is often overlooked. It is common to quote only on the basis of age and gender without checking whether these are the relevant factors for representativeness.

2. the measurement is distorted.

I can ask consumers, "Would you buy this phone?" But whether the answer is true (i.e., undistorted) is another matter. A central focus of marketing research in recent years has been the development of valid scales. More recently, implicit measures have been added. The art of questionnaire design is one of them.

On the other hand, questions about relationships are: "Do customers in the target group buy my product more than other target groups?" The assumption is that the target group characteristic is the cause of the purchase. A relationship is assumed between consumer characteristics and willingness to buy. It is no longer just a matter of showing the difference between target groups, because that would be the same as a correlation analysis, which, as the example above shows, is a poor form of analysis for relationships. In my opinion, the question of relationships is not discussed enough, although it is of particular importance.

The Evidence Score

The vague term "relationship" refers to something very specific: a causal relationship. All business decisions are based on it. They are based on assumptions about causality. "If I do X, Y will happen". Discovering, exploring, and validating these "relationships" is what most market research is about (consciously or unconsciously).

But whether we can trust a statement about a relationship is determined by the product of the following three criteria:

Completeness (C for complete): How many other possible causes and conditions are there that also affect the target variable but have not yet been considered in the analysis? This can be expressed as a subjective probability (a priori probability in the Bayesian sense): 0.8 for "reasonably complete", more likely 0.2 for "actually most are missing", or 0.5 "the most important are included".

But why is completeness so important? One example: Shoe size has some predictive power for career success because, for various reasons, men tend to climb the corporate ladder higher on average and have larger feet. If gender is not included in the analysis, there is a high risk of falling for spurious effects. In causal research, this is known as the "confounder problem. Confounders are unaccounted for variables that affect both cause and effect. Even today, most driver models are calculated with "only a handful" of variables, which greatly increases the risk of spurious results.

The issue of representativeness is logically related to completeness. Either you ensure a representative sample (which is more or less impossible) and control for confounding factors, or you measure the factors that influence the relationships to be measured (demographics, types of buyers, etc.) and integrate them into the multivariate analysis of the relationships.

Correct direction of effect (D - Directed correctly): How certain can we be that A is the cause of B and not the other way around? In many cases, you can rely on prior knowledge, or you may have longitudinal data. Otherwise, statistical methods of "d-separation" (e.g., PC algorithm) must be applied. So again, the question is how subjective is the probability: more like 0.9 for "well, that's well documented" or 0.5 for "well, that could be either way"?

Predictive power (P - Prognostic): How much variance in the affected variable is explained by the cause? Effect size measures the absolute amount of variance explained by a variable. In his research, Nobel Laureate G. Granger once stated: In a complete (C), correctly specified (D) model, the explanatory power of a variable proves its direct causal influence. The predictive power should be assessed by a Causal AI algorithm, because only those are optimized to look for causal drivers instead of just indicators.

If one of the three variables C, D, or P is missing, the evidence for the relationship is very weak. This is because all three are interdependent. Predictive power without completeness or direction is worthless.

Mathematically, all three values can be combined multiplicatively. If one is small, the product is very small:

Evidence = C x D x P

This evidence is a proven tool in Bayesian information theory and at the same time a practical and useful value for judging a relationship.

Moving beyond black-and-white thinking

If the Evidence is high, then, yes, you can ask again: what is the significance of the relationship? But this is not about reaching a threshold, because the P-value cannot be the only criterion for accepting a result. The P-value, as a continuous measure, is more informative and tells us how stable the statement is. Nothing more.

I'm back on LinkedIN and I read another post by Ed Rigdon presenting his paper on the subject. He writes:

“How about just treating P-values as the continuous quantities they are. Don't let an arbitrary threshold turn your P-value into something else, and don't misinterpret it. And while you're at it, remember that your statistical analysis probably overlooked a lot of uncertainties, which means your confidence intervals are probably much too narrow.”

The next time a customer asks me what the P-value is, I'm going to tell them, "I don't recommend looking at the P-value as a confidence check. We measure the evidence score these days. Ours is 0.5, and the last model you used to make a high significance decision was only 0.2".

The concept of evidence is certainly not as convenient as significance. But I didn't say that truth was easy to come by. Imagine if your entire organization started to wake up from the "significance illusion," started to think more holistically. It would simply make better decisions that have greater impact.

That is how you 10x your impact.

REFERENCES

[1] “How improper dichotomization and the misrepresentation of uncertainty undermine social science research”, Edgar Rigdon, Journal of Business Research, Volume 165, October 2023, 114086

[2] “The American Statistical Association statement on P-values explained”, Lakshmi Narayana Yaddanapudi, www.ncbi.nlm.nih.gov/pmc/articles/PMC5187603/